🔬🌍 Environment and Breast Cancer: A Major Step Forward from a Strasbourg Research Team

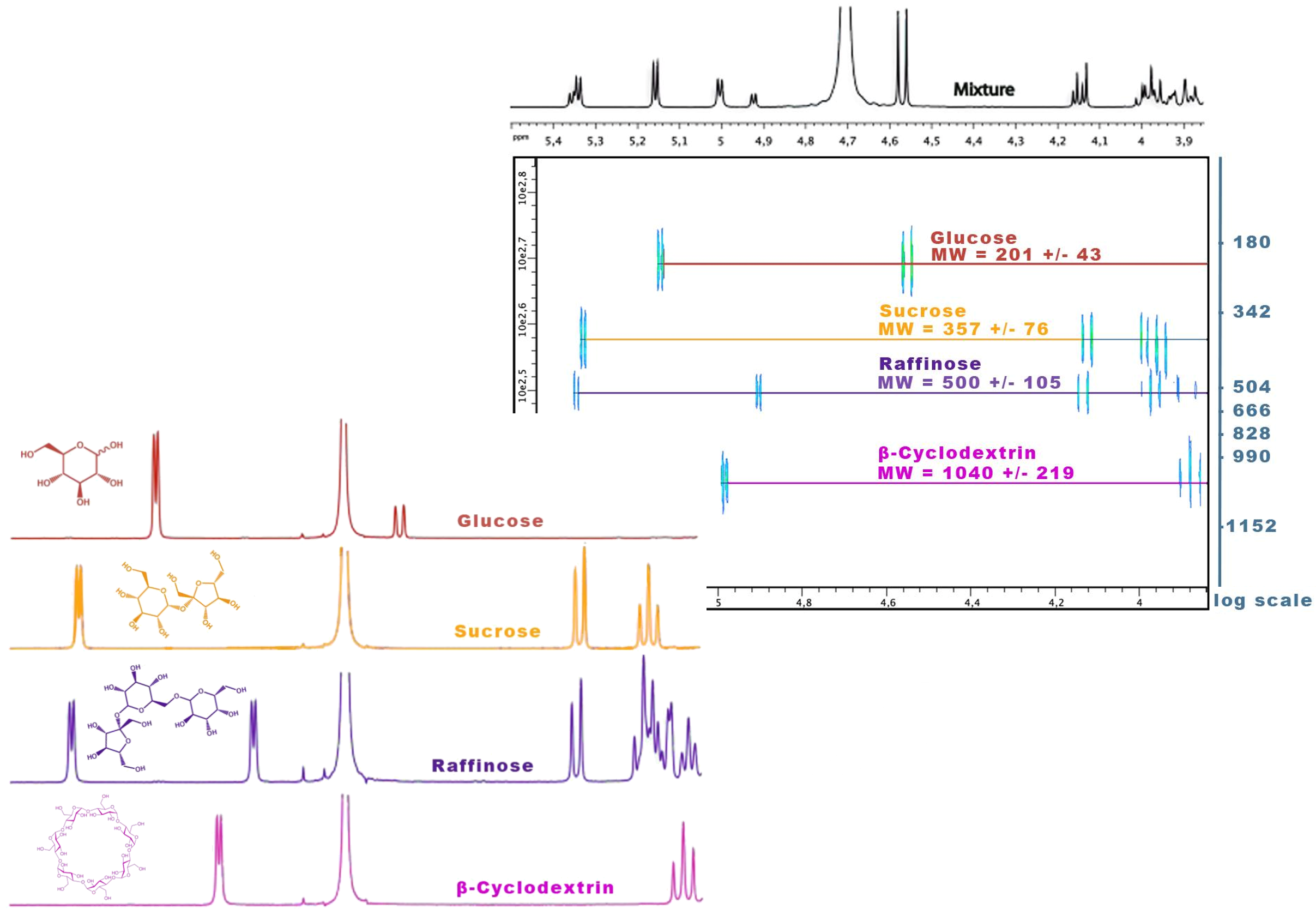

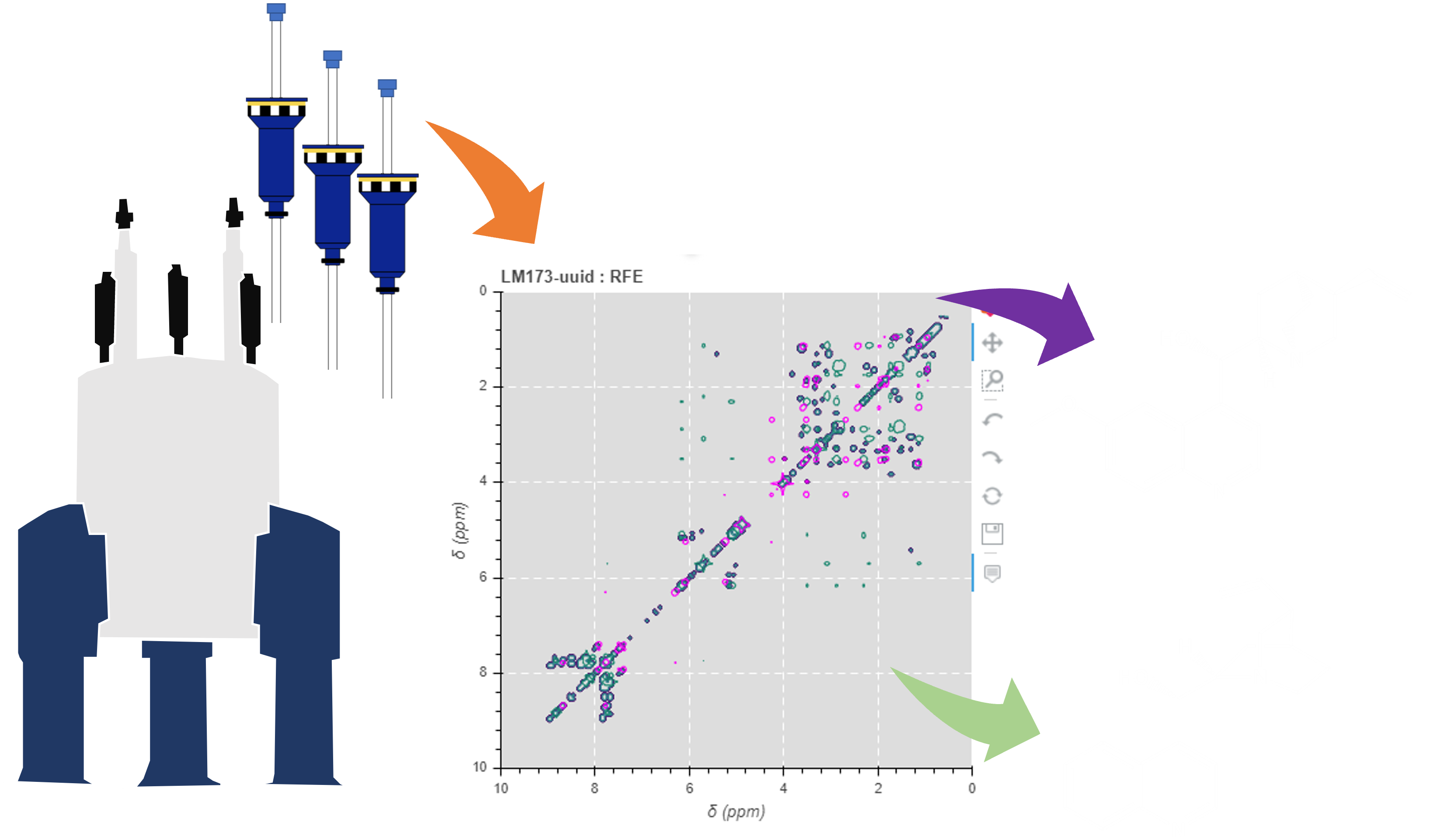

In October 2025, at the 31st International Senology Meetings in Strasbourg, the team led by Prof. Carole Mathelin at ICANS (Institut de cancérologie Strasbourg Europe) presented preliminary findings showing a link between environmental exposures and breast cancer.